AI Clinical Decision Support Redesign

Client: Optum / UnitedHealth Group

Role: Principal Product Designer

Context

Optum set out to modernize how clinicians access Clinical Decision Support (CDS) during care delivery. Traditional CDS systems rely on predefined clinical events, such as prescribing medication or reviewing lab results, to trigger passive alerts and recommendations through CDS Hooks. In addition, the sum of medical knowledge is growing at an unprecedented rate, doubling every 73 days by one widely cited estimate.

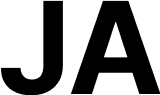

As a result, clinicians are increasingly grappling with an overwhelming amount of information, making it nearly impossible to perfectly recall the most recent clinical guidance when meeting with their patients. To address these challenges, Optum set out to build an EHR-integrated, point-of-care decision support tool for guideline-directed care and patient-specific medication recommendations.

Situation

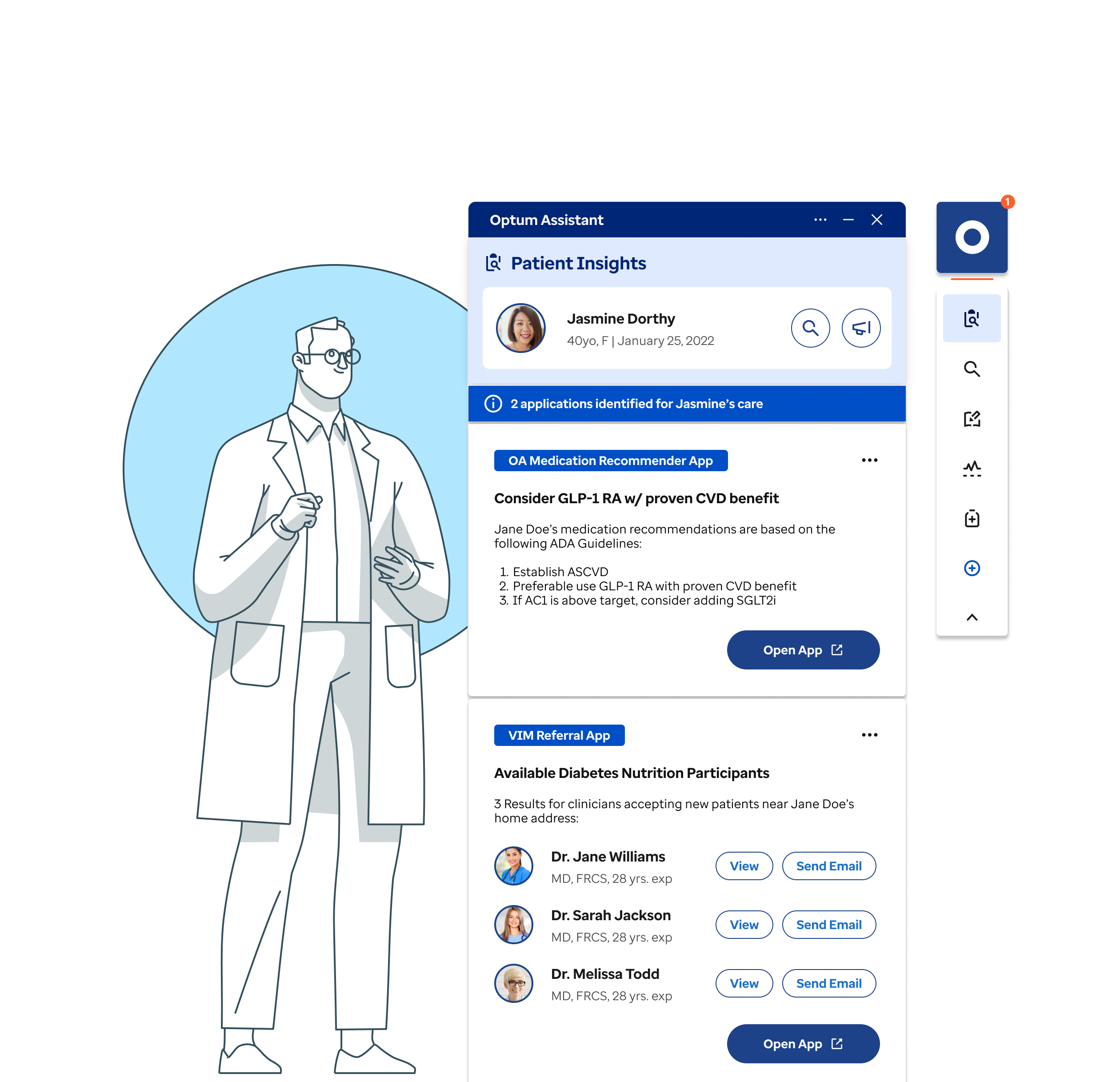

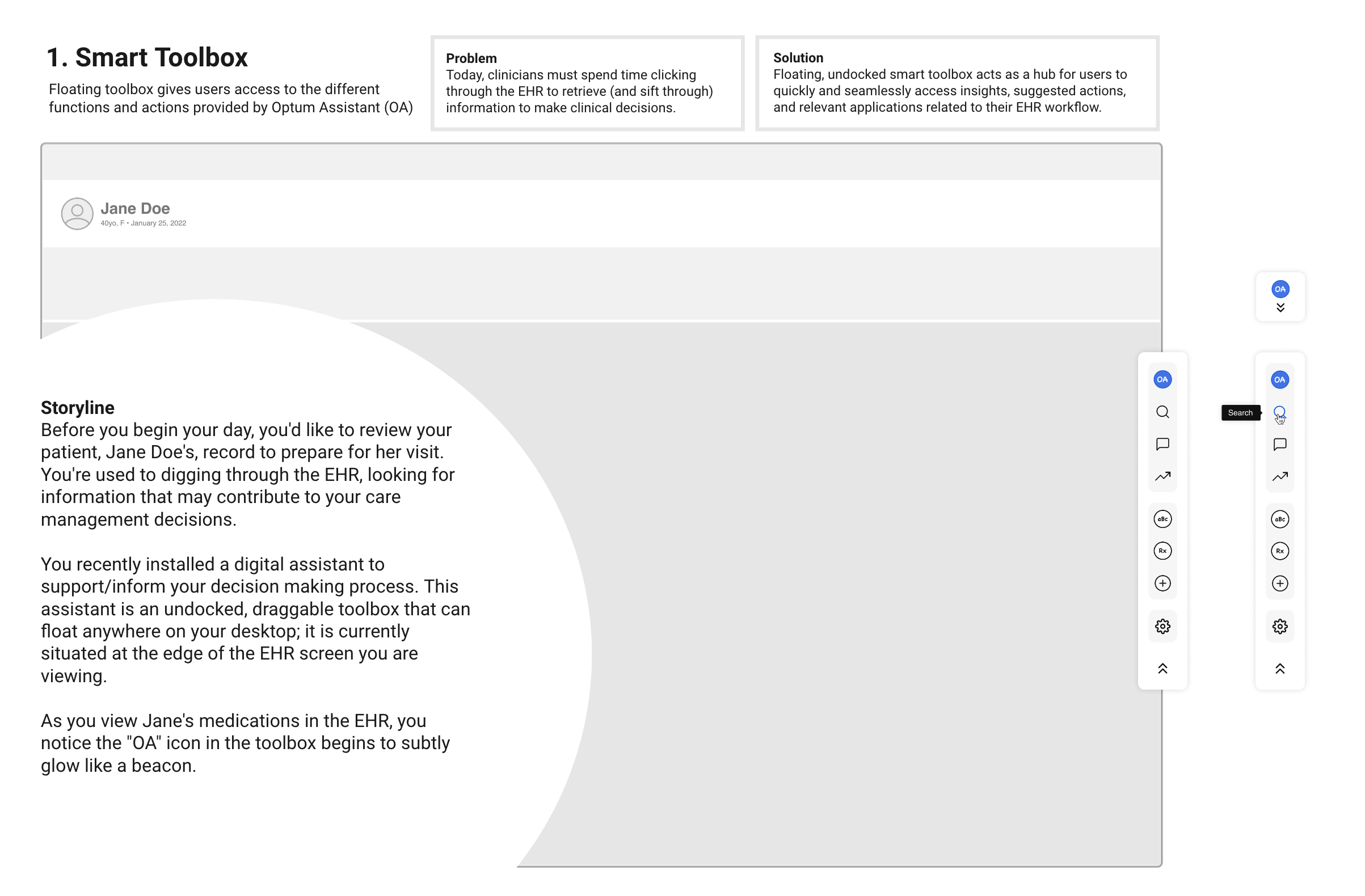

Optum developed an MVP decision-support tool for in-network clinicians, designed to deliver concise, guideline-based recommendations tailored to each patient’s conditions. However, the initial MVP largely mirrored the dense, cumbersome UI of existing EHR systems, adding complexity instead of reducing it. Our challenge was to transform this into a streamlined, context-aware experience that fits naturally into clinicians’ workflows.

Initial MVP Design

CDS Fatigue

“I’ve stopped reading most CDS alerts unless I know they’re tied to something urgent. There’s just too many of them.” - Hospitalist, Internal Medicine

Complication

While the new vision for an AI-enabled CDS assistant was ambitious, several critical risks needed to be addressed before development could proceed:

- Clinician adoption was uncertain. Clinicians perceived existing CDS tools as noisy, interruptive, and misaligned with actual clinical workflows. For the assistant to succeed, it needed to deliver timely, context-aware insights while reducing cognitive load rather than becoming another layer of interruption.

- Buyer confidence and scalability were open questions. Adoption depended not only on clinician usability, but on whether clinical and operational leaders—such as CMOs and health system administrators—viewed the platform as credible, scalable, and worthy of long-term investment.

- Focus and prioritization posed a real risk. Without validated insights into clinician workflows and decision-making behaviors, the team risked overbuilding features or developing functionality that did not align with actual needs.

Together, these risks pointed to a core experience design question about trust, workflow fit, and perceived value across different user groups. How might we validate and simplify an assistant-based CDS experience that clinicians would adopt, leaders would invest in, and organizations could scale with confidence?

Resolution

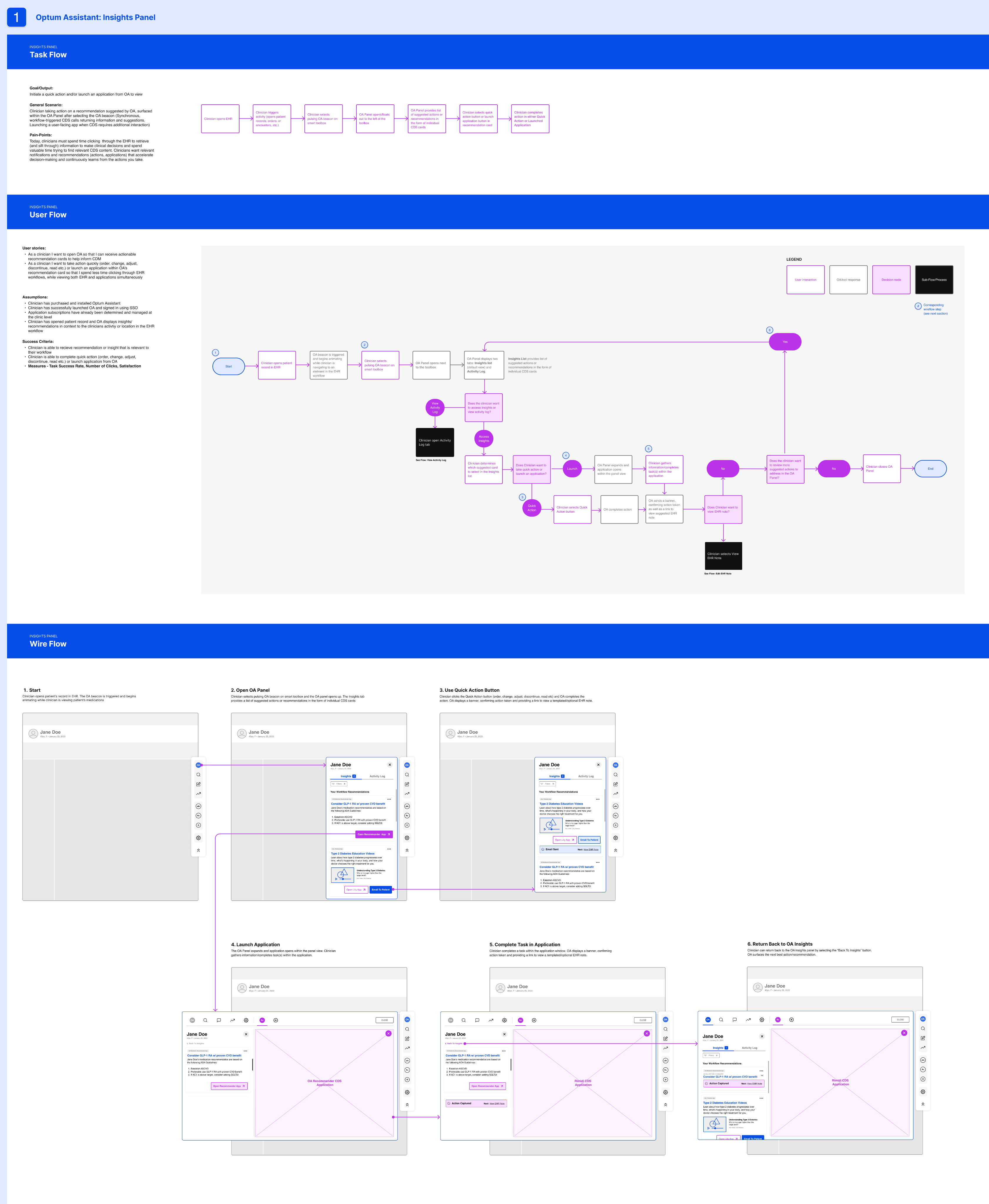

To address the adoption, scalability, and focus risks identified earlier, I led UX strategy, research, prototyping, and validation to define a minimum viable assistant that met both clinical and organizational needs. This end-to-end approach gave stakeholders confidence that the solution could be trusted and scaled for future integration.

Conducted targeted discovery to validate unmet needs

I interviewed 20+ clinicians and clinical leaders to understand where existing CDS tools created friction and failed to support real workflows. This research clarified unmet needs across both user groups and established a shared understanding of what "useful" decision support meant in practice, giving us confidence that the assistant could solve meaningful problems rather than add noise.

“What I need is support, not interruptions. Most tools feel like extra work instead of actual help.” - Primary Care Physician, Small Hospital System

“I don’t want more alerts. I want meaningful insights that help me make better decisions.” - Internal Medicine, Large Hospital System

“I’ve stopped reading most CDS alerts unless I know it’s urgent. There’s just too many of them.” - Hospitalist, Medium Hospital System

“My workflow is unique to me. When tools know my workflow, that’s when they actually help.” - Urgent Care Doctor, Retail Clinic Chain

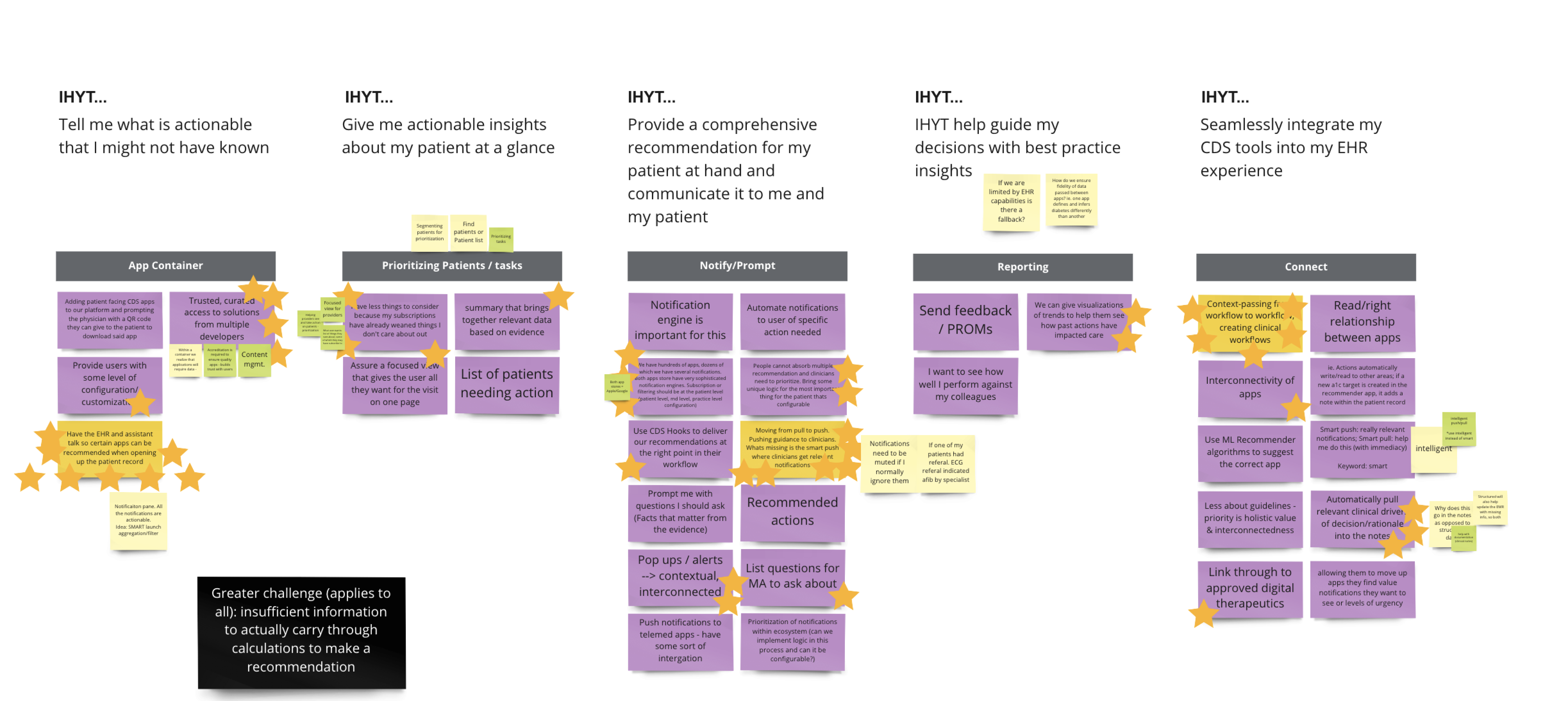

Structured ideation to reduce overbuild risk

To mitigate overbuilding and misalignment, we translated these insights into clear jobs-to-be-done and structured ideation using the "How Might We" and "I Hire You To" frameworks. This approach reassured stakeholders that our prioritization was systematic and focused on outcomes, ensuring the MVP stayed aligned with clinician decision-making and buyer value.

Validated product-market fit with end users and buyers

To validate workflow fit and buyer confidence, I tested the assistant's value proposition through low-fidelity storyboards with clinicians and card-sorting exercises with clinical leaders to confirm information architecture and conceptual structure. I then led the design and testing of a mid-fidelity prototype across five core flows with five physicians. Results showed strong task success (86%), high usability (4.8/5), and strong leadership confidence (5.0/5 likelihood to recommend), confirming the experience aligned with clinician mental models and organizational evaluation criteria.

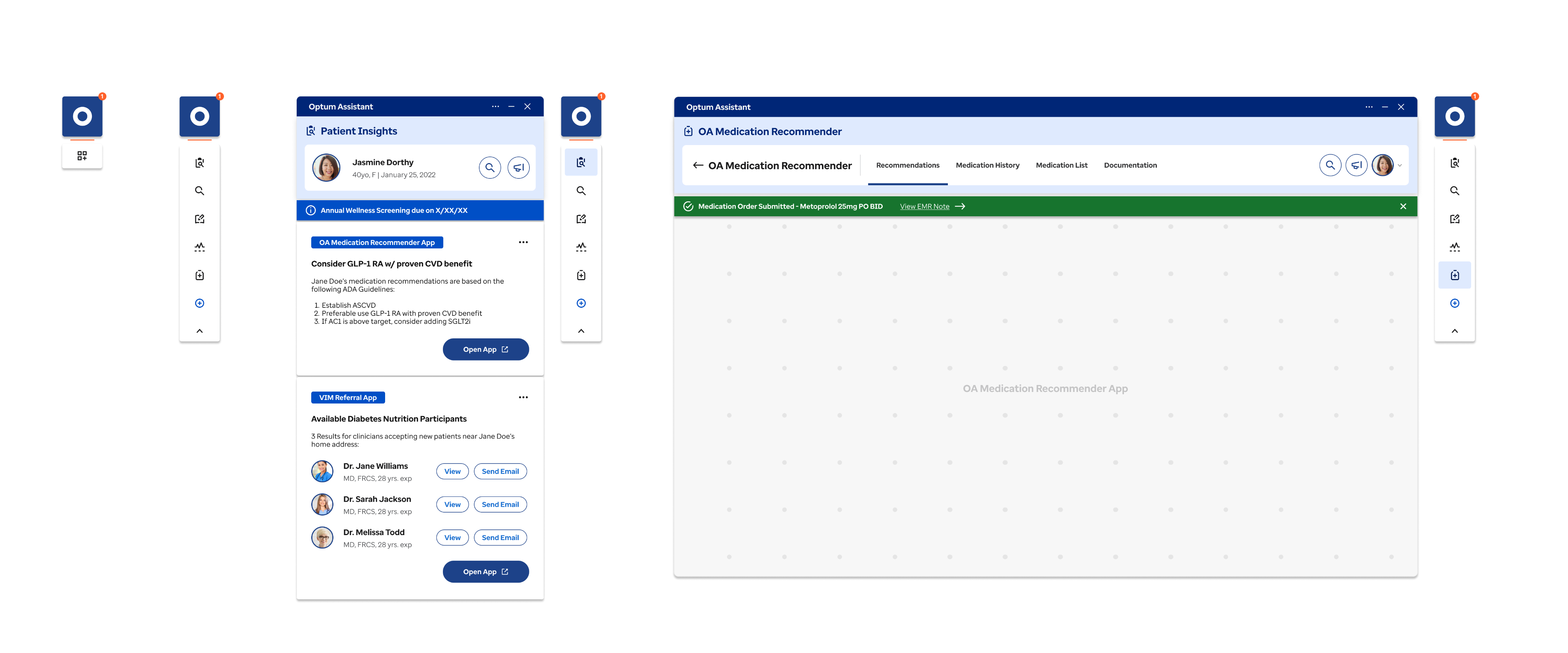

With validation in hand, we moved from mid-fidelity to high-fidelity design to prepare for implementation. Our focus shifted to refining visual hierarchy, interaction details, and accessibility standards while preserving the proven workflows and mental models from testing. The high-fidelity prototype served as a comprehensive asset for design and engineering teams.

Impact: From Discovery to Proven Product–Market Fit

By grounding the MVP in validated clinician needs and buyer expectations, the team exited discovery with high confidence to proceed into product development, having addressed the key adoption, scalability, and focus risks identified upfront. This validation gives stakeholders evidence that the project rests on a solid foundation.

- Product–market fit was validated across both clinicians and organizational stakeholders. Clinicians consistently described the assistant as intuitive, relevant, and easy to navigate, confirming alignment with real clinical workflows. Clinical leaders expressed strong interest in adoption, citing alignment with organizational goals, scalability, and ROI potential.

- Usability and task performance improved significantly. Task success reached 86% overall, with notable gains in redesigned workflows such as the Activity Log (improving from 73%). Users rated the experience highly across satisfaction (4.8/5), comprehension (4.6/5), and ease of use (4.4/5), validating that the assistant reduced cognitive load rather than adding friction.

- Adoption intent increased measurably among decision-makers. Likelihood to Request rose from 4.4 to 5.0 post-validation, and Net Promoter Score improved by 10 points (from 50 to 60), demonstrating growing trust in the assistant's value and readiness for rollout.

The team delivered all assets needed for a confident build.

Assets included a clickable prototype, annotated wireframes, usability reports, and strategic recommendations, which enabled aligned decision-making, reduced ambiguity, and a clear path into development.